Methods to collect E-Mails

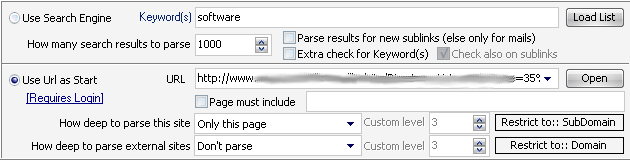

Use Search Engines

Use this option to find e-mail addresses of people who have some relation to the entered keyword(s). You can also set how many of the search engine's results to parse (1000 is the maximum, as most search engines will not deliver more than that). The option “Parse results for new sublinks” also parses each found website for sublinks, so it may find a “Contact” page for that homepage.

The option “Extra check for Keyword(s)” verifies that the found webpage actually contains the entered keyword. Enable this option to prevent false-positive results from the search engine.

Use URL as Start

Use this option if you already have a website that you want to extract e-mails from.

The option “Page must include” checks the downloaded page for this keyword and continues parsing only if the keyword is present on it.
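
The kind of check described here can be pictured with a few lines of Python. This is only an illustration of the idea, not the program's actual code: the function name `page_includes` is made up, and the assumption that the keyword is matched case-insensitively against the visible text is ours.

```python
import re
from html import unescape


def page_includes(html: str, keyword: str) -> bool:
    """Return True if keyword occurs in the visible text of the page.

    Assumption: the match is case-insensitive and ignores markup.
    """
    # Drop the bodies of <script> and <style> elements first.
    text = re.sub(r"(?is)<(script|style)\b.*?</\1>", " ", html)
    # Drop all remaining tags, keeping only the text between them.
    text = re.sub(r"(?s)<[^>]+>", " ", text)
    # Turn entities such as &amp; back into plain characters.
    text = unescape(text)
    return keyword.casefold() in text.casefold()
```

A page that fails this check would simply be skipped instead of being parsed for e-mails and further links.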

How deep to parse this site

This lets you choose how this URL gets parsed and how deep. Every link that stays on the same domain counts as a certain level of sublink from that site.

How deep to parse external sites

Each link that leaves the entered site's domain is treated as an external site. For example, if you parse https://www.gsa-online.de and the program finds a link to https://www.google.de, then that site is the first level of external site.
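
To make the two depth settings more concrete, here is a minimal Python sketch of a level-limited walk over a link map that is already in memory. It is not how the program is implemented. The function `crawl_order`, its parameters, and the rule that every link followed from an external page counts as one more external level are assumptions made for illustration, and hosts are compared by exact name to keep it short.

```python
from collections import deque
from urllib.parse import urlsplit


def crawl_order(start, links, max_internal, max_external):
    """Breadth-first walk over `links` (URL -> list of URLs found on it).

    Returns the URLs that would be visited, in visiting order.
    """
    start_host = urlsplit(start).hostname
    seen = {start}
    queue = deque([(start, 0, 0)])  # (url, internal level, external level)
    visited = []
    while queue:
        url, in_lvl, ex_lvl = queue.popleft()
        visited.append(url)
        for nxt in links.get(url, []):
            if nxt in seen:
                continue
            if urlsplit(nxt).hostname == start_host and ex_lvl == 0:
                # Still on the start site: one internal level deeper.
                level = (in_lvl + 1, 0)
                allowed = level[0] <= max_internal
            else:
                # Left the start site (or already outside it):
                # one external level deeper.
                level = (in_lvl, ex_lvl + 1)
                allowed = level[1] <= max_external
            if allowed:
                seen.add(nxt)
                queue.append((nxt, *level))
    return visited
```

With `max_external=0` the walk never leaves the start site; with `max_external=1` it visits pages directly linked from the start site but does not follow their links any further.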

Restrict to: Domain/SubDomain

A domain is e.g. gsa-online.de, whereas a sub-domain is e.g. email.spider.gsa-online.de. Whatever you plan to parse, you can restrict it to one setting or the other.
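
One possible reading of the two settings, sketched in Python: “Domain” keeps every link whose host ends in the same domain, while “SubDomain” keeps only links on exactly the same host. The helper names and the `mode` values are hypothetical, and the domain is guessed naively as the last two labels of the host name, which is wrong for suffixes such as co.uk.

```python
from urllib.parse import urlsplit


def host_of(url: str) -> str:
    """Return the lowercase host name of a URL ('' if there is none)."""
    return (urlsplit(url).hostname or "").lower()


def registered_domain(host: str) -> str:
    """Naive guess: the last two labels, e.g. 'gsa-online.de'.

    A real tool would consult the Public Suffix List instead.
    """
    return ".".join(host.split(".")[-2:])


def within_restriction(start_url: str, link: str, mode: str) -> bool:
    """mode='domain': same domain; mode='subdomain': exactly the same host."""
    start_host, link_host = host_of(start_url), host_of(link)
    if mode == "subdomain":
        return link_host == start_host
    return registered_domain(link_host) == registered_domain(start_host)
```

Starting from a page on email.spider.gsa-online.de, a link to www.gsa-online.de would pass the “domain” check but fail the “subdomain” check in this sketch.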

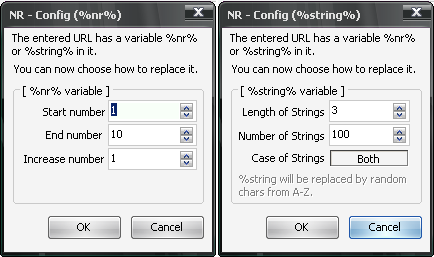

Placeholders

You can use special placeholders (variables) in the URL field. When you enter %nr% or %string% inside the URL, you can define a range of values to replace it with.

If you enter e.g. http://www.some-site.com/?page=%nr%, the program replaces %nr% with the numbers that you define and adds one URL to parse for each of them. The same applies to the placeholder %string%.

%nr% stands for “number” and inserts a number counting from the start number to the end number that you define, whereas %string% inserts a randomly generated string. The result is a set of generated URLs that are all parsed by the program. You don't need %string% in most cases. %nr% is the more interesting one, as it can speed things up when you have found a URL that holds all the data you need and takes a numeric parameter. Usually this is a database ID.
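
The expansion can be sketched in Python as follows. This only illustrates the idea; the function names, the lowercase alphabet, the default string length, and the `seed` parameter are assumptions, not settings taken from the program.

```python
import random
import string


def expand_nr(template: str, start: int, end: int) -> list[str]:
    """Replace %nr% with every number from start to end (inclusive)."""
    return [template.replace("%nr%", str(n)) for n in range(start, end + 1)]


def expand_string(template: str, count: int, length: int = 6, seed=None) -> list[str]:
    """Replace %string% with `count` random lowercase strings of `length` letters."""
    rng = random.Random(seed)  # pass a seed for reproducible output
    urls = []
    for _ in range(count):
        token = "".join(rng.choice(string.ascii_lowercase) for _ in range(length))
        urls.append(template.replace("%string%", token))
    return urls


for url in expand_nr("http://www.some-site.com/?page=%nr%", 1, 3):
    print(url)
# http://www.some-site.com/?page=1
# http://www.some-site.com/?page=2
# http://www.some-site.com/?page=3
```

Each generated URL is then treated like a separately entered start URL.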

Login Required Websites

Some websites require you to enter a login and password to get to a certain point of interest. There are basically two ways to log in.

Auth-Http-Header

This method is a server-based authorization: your browser asks you to enter a login/password before it opens the website. If this is the case, you can use the following format when entering the URL:

http://login:password@www.some-site.com/page.html
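
For readers curious what a client typically does with such a URL, here is a minimal Python sketch. It assumes the server uses the HTTP Basic scheme (a server may use Digest instead, which works differently), and the function name `split_credentials` is made up for this example. The credentials are cut out of the URL and sent as an `Authorization` header.

```python
import base64
from urllib.parse import unquote, urlsplit, urlunsplit


def split_credentials(url: str):
    """Return (url_without_credentials, headers) for a login:password@ URL.

    Assumption: HTTP Basic authentication. IPv6 literal hosts are not handled.
    """
    parts = urlsplit(url)
    host = parts.hostname or ""
    if parts.port:
        host += f":{parts.port}"
    clean = urlunsplit((parts.scheme, host, parts.path, parts.query, parts.fragment))
    headers = {}
    if parts.username is not None:
        user = unquote(parts.username)
        password = unquote(parts.password or "")
        token = base64.b64encode(f"{user}:{password}".encode("utf-8")).decode("ascii")
        headers["Authorization"] = f"Basic {token}"
    return clean, headers


clean_url, headers = split_credentials("http://login:password@www.some-site.com/page.html")
print(clean_url)  # http://www.some-site.com/page.html
```

If the login or password contains characters such as `@` or `:`, they have to be percent-encoded in the URL (for example `%40` for `@`) so that the URL can still be split correctly.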

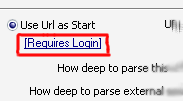

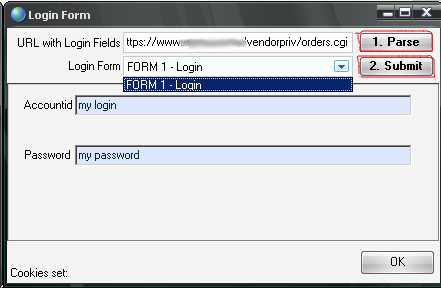

Website-Form

If you have to open a special website to enter a login and password followed by pressing a button on the page, you have to click on the label “Requires Login” as seen in the screenshot below.

A new form opens where you can simulate the login.

- Enter the URL and click on 1. Parse

- Choose the login form in the box below (usually detected automatically)

- Fill the fields with login/password

- Click on 2. Submit

A page should open in your browser, hopefully showing some indication that the login was successful. Usually you will see a “Logout” link or button, or a welcome message.
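
The parse and submit steps above can be pictured with the following Python sketch. It is not the program's implementation. All names are hypothetical, and it assumes that the login form is the first form containing a password field and that the login name belongs in a text or e-mail field. The sketch only prepares the data to be sent and does not perform any network request.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode, urljoin


class FormFinder(HTMLParser):
    """Collect every <form> on a page together with its named <input> fields."""

    def __init__(self):
        super().__init__()
        self.forms = []
        self._current = None

    def handle_starttag(self, tag, attrs):
        a = dict(attrs)
        if tag == "form":
            self._current = {
                "action": a.get("action") or "",
                "method": (a.get("method") or "get").lower(),
                "fields": {},
            }
            self.forms.append(self._current)
        elif tag == "input" and self._current is not None and a.get("name"):
            self._current["fields"][a["name"]] = {
                "type": (a.get("type") or "text").lower(),
                "value": a.get("value") or "",
            }

    def handle_endtag(self, tag):
        if tag == "form":
            self._current = None


def find_login_form(html: str):
    """Step 'Parse': return the first form that has a password field, or None."""
    finder = FormFinder()
    finder.feed(html)
    for form in finder.forms:
        if any(f["type"] == "password" for f in form["fields"].values()):
            return form
    return None


def build_submission(page_url: str, form: dict, login: str, password: str):
    """Step 'Submit': return (target URL, encoded form data)."""
    data = {}
    for name, field in form["fields"].items():
        if field["type"] == "password":
            data[name] = password
        elif field["type"] in ("text", "email"):
            data[name] = login
        elif field["type"] == "hidden":
            data[name] = field["value"]  # keep tokens the site expects back
    return urljoin(page_url, form["action"]), urlencode(data)
```

After sending this data with the form's method, a client could look for text such as “Logout” in the response, which mirrors the manual check described above.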